Well, among other things…

Entropy is a measure of the “degrees of freedom” available to a system. Generally speaking, the more components, sub-systems, artefacts and entities that compose a system, the more degrees of freedom are available to it. Because there are always more possible disordered system states than ordered ones (in the total state space of all possible states), systems tend to drift probabilistically into disordered states. Entropy is a measure of the openness of a system to future states; the thermodynamic drift into low-energy configurations of maximal disorder and randomness is a consequence of the impressive economy of energy found in nature.
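The claim that disordered states outnumber ordered ones can be made concrete with a toy model. The sketch below is only an illustration, not a physical simulation: it assumes a "system" of 20 fair coins, treats "all heads" or "all tails" as the ordered states, and treats anything from 8 to 12 heads as "disordered". The function name `microstate_counts` and those thresholds are my own assumptions.

```python
from math import comb, log2

def microstate_counts(n_coins):
    """Map each possible number of heads to the number of distinct
    arrangements (microstates) that produce it."""
    return {heads: comb(n_coins, heads) for heads in range(n_coins + 1)}

n = 20                      # hypothetical system size
counts = microstate_counts(n)
total = 2 ** n              # every coin has two states

# "Ordered" here means all tails or all heads: one arrangement each.
ordered = counts[0] + counts[n]

# "Disordered" here means a roughly even mix (8 to 12 heads).
near_even = sum(counts[h] for h in range(8, 13))

print(f"ordered arrangements:   {ordered} of {total}")
print(f"near-even arrangements: {near_even} of {total}")
print(f"share of near-even:     {near_even / total:.3f}")

# Log of the microstate count for the most mixed macrostate, in bits.
# This is the Boltzmann-style entropy of that macrostate, up to a constant.
print(f"log2(arrangements with 10 heads): {log2(counts[n // 2]):.2f} bits")
```

A system shuffled at random lands in the near-even band far more often than in either ordered state, simply because that band contains vastly more arrangements. Nothing pushes it there; the drift is a matter of counting.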

Self-propagating, self-replicating and self-organising systems buck the trend of dissolution into disorder, but they do it in a very mischievous way. The entropy still exists, but as information entropy, and the randomness of thermodynamic equilibrium is offset (if only temporarily) by the assertion of an abstract or logical depth. Not dissimilar to imaginary numbers in the complex plane, this is a depth in some sense perpendicular to material complexity and disorder.

2 replies on “What is Entropy?”

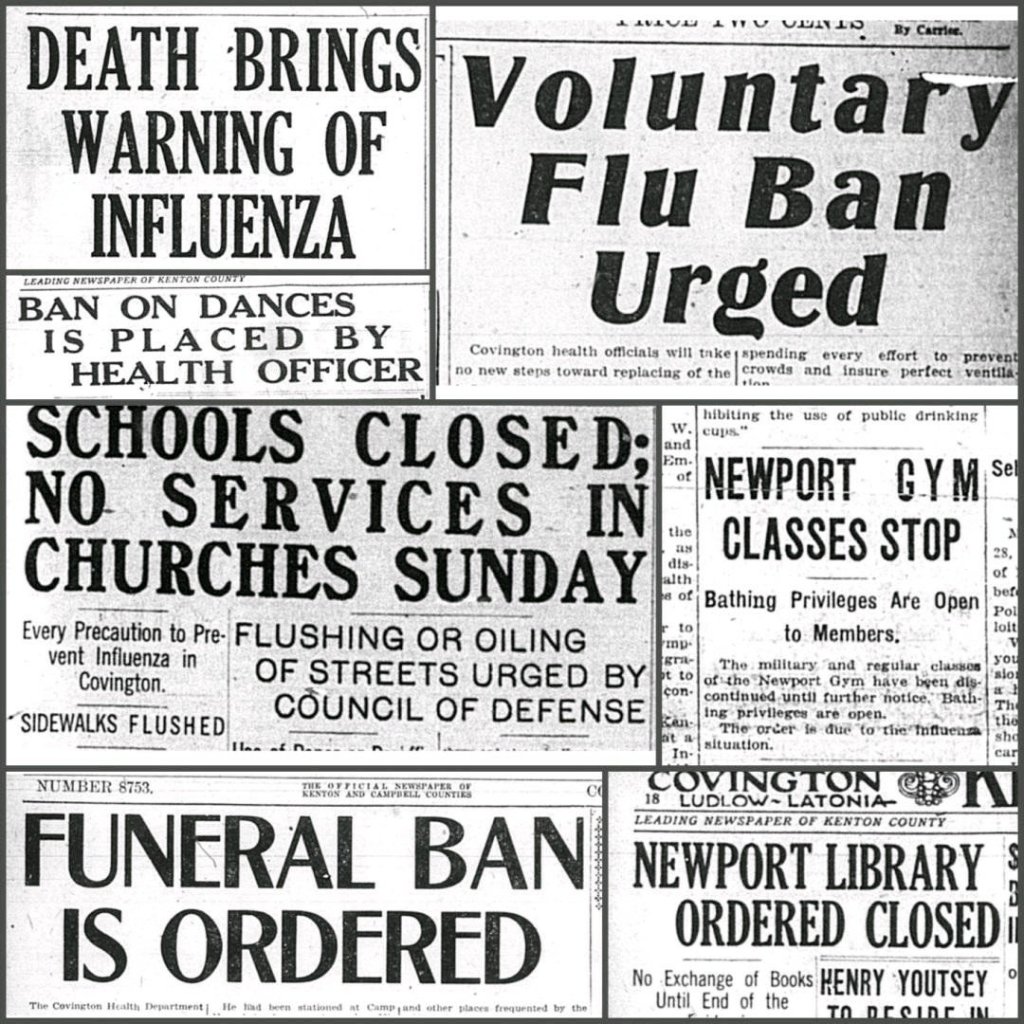

Well put, and the front pages of newspapers looked like they could have been last week’s or last month’s if not for the date printed fuzzily. What interests me about entropy is how everything from molecules on up to humans, solar systems, and galaxies struggles to defy entropy. I’ve become interested in viruses lately, which are basically pre-living or parasitically living protein molecules capable of self-assembly, motility, and seemingly purposeful molecular-mechanical functions, fueling their operations with the energy available to them while taking advantage of the instability of RNA genomes to avoid the more-or-less static defenses of our immune systems. All this without brains, just a demiurge to continue existing. 🙂


Entropy is simultaneously the possibility (or probability) of disassembly *and* the aperture of opportunity through which living systems and intelligence emerge and self-propagate; errors in information coding are as vital as are accurate transcriptions, replications, representations. It is an information war – genetic, among other things – but entropy is also the source and font of all information.

Entropy does not dissolve information: it generates it. Information is entropy’s shadow.
