Can algorithms successfully encode the stochastic properties and endemic entropy that provide sentience with evolutionary intelligence and a bias towards novelty and (information) entropy? There are logical (and philosophical) limits to algorithmic compression or optimisation that biology has nevertheless successfully exploited, not by removing errors but – from genetics to intelligence – by capitalising on them.

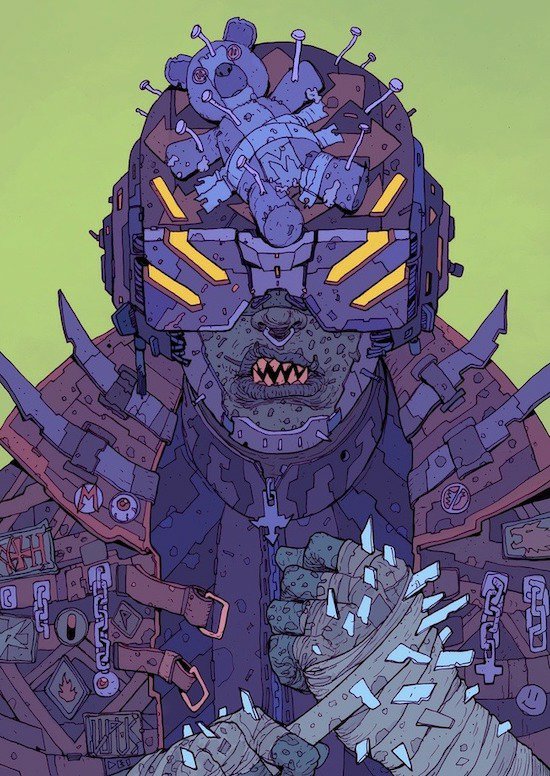

Machines will undoubtedly win this competition: their economies of scale and productivity naturally bias us towards their necessary ascendance. Something will be lost along the way, a subtly self-inflected logical bootstrap that (so far) only exists in the organic sentience of minds and brains. It will be lost because the rise of an algorithmic conceptual vocabulary, metric and value-system has simultaneously seen the reflexive diminution of how we define and understand ourselves.

We find ourselves enthusiastically pursuing the meteoric rise of specialised computational artefacts which are utterly hollow of substantive introspection and qualitative experience. We now measure ourselves by these mechanisms and, in the longer term, we shall quite possibly lose a little of our own beautifully flawed humanity along the way.

Context: At the limits of thought

One reply on “The Limits of Thought: Algorithms, Brains and our Fragile Humanity”

You write “There are logical (and philosophical) limits to algorithmic compression or optimization that biology has nevertheless successfully exploited, not by removing errors but – from genetics to intelligence – by capitalizing on them.” There is a form of logical algorithm based on Fuzzy Logic that capitalizes on inaccuracies to gain time advantages. It is used in military applications as well as others. FL would solve “the square root of 65” as “8.something”. Almost does not only count in horseshoes and hand grenades. 😉
