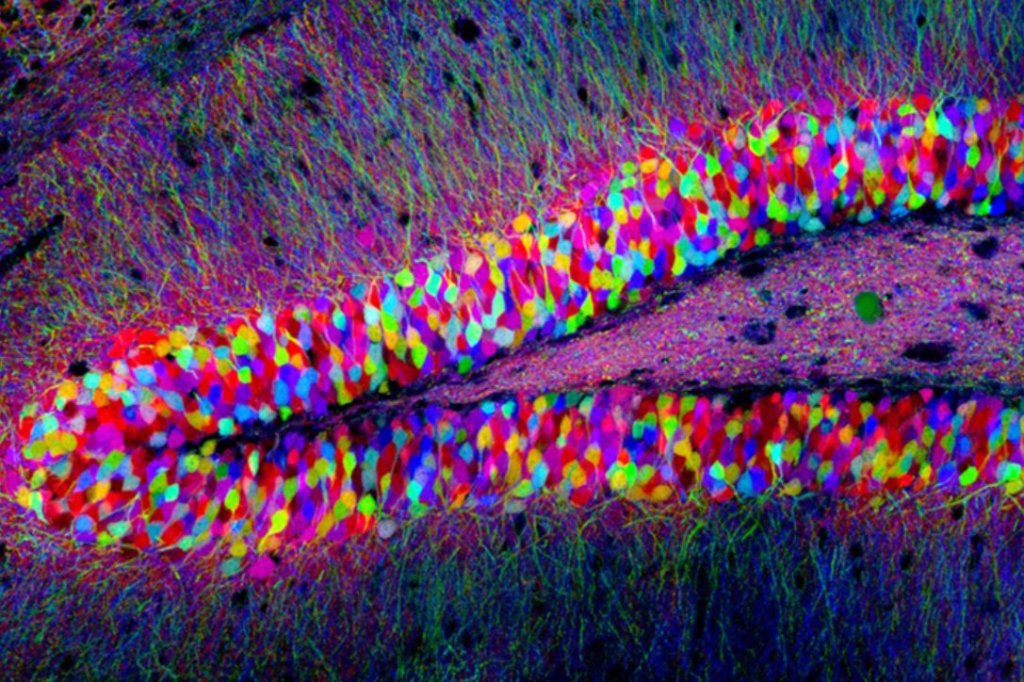

Some fascinating video lectures on connectomics from Jeff Lichtman of Harvard University are linked below. Their implications for technological aspirations to machine intelligence are left implicit.

Connectomics: seeking neural circuit motifs

• Brains begin as maximally interconnected wired networks, and it is the imprinting of experience that prunes this connectivity into sparser networks.

• Axons are competitive and there is symmetry (as logical bias) to the graph structure of their interconnectivity.

• The complexity of biological neural networks is many orders of magnitude beyond even the most sophisticated artificial neural networks.
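The first point above can be illustrated with a toy sketch. It is not a biological model, and nothing in it comes from the lectures: the function name `prune_by_experience`, the co-activation "events", and the `keep_fraction` parameter are all assumptions made for illustration. The idea is only that a fully connected graph, exposed to biased patterns of use, can be thinned to the edges that experience actually exercised.

```python
import itertools
import random


def prune_by_experience(n_nodes=12, n_events=200, keep_fraction=0.25, seed=0):
    """Toy illustration: thin a complete graph down to its most-used edges.

    All names and parameters here are hypothetical, chosen for illustration.
    """
    rng = random.Random(seed)

    # Start maximally interconnected: every pair of nodes shares an edge.
    usage = {edge: 0 for edge in itertools.combinations(range(n_nodes), 2)}

    # Assumed stand-in for "experience": some nodes are simply more likely
    # to be active than others, so some pairs co-activate more often.
    bias = [rng.random() for _ in range(n_nodes)]

    for _ in range(n_events):
        # Each event co-activates up to three distinct nodes.
        active = sorted(set(rng.choices(range(n_nodes), weights=bias, k=3)))
        for edge in itertools.combinations(active, 2):
            usage[edge] += 1

    # Prune: keep only the most frequently used fraction of edges.
    n_keep = max(1, int(keep_fraction * len(usage)))
    ranked = sorted(usage, key=usage.get, reverse=True)
    kept = set(ranked[:n_keep])
    return usage, kept


if __name__ == "__main__":
    usage, kept = prune_by_experience()
    print(f"edges before pruning: {len(usage)}")
    print(f"edges after pruning:  {len(kept)}")
    print(f"density after pruning: {len(kept) / len(usage):.2f}")
```

In this sketch the surviving graph is a record of which pairs were used together, which is the sense in which the pruned structure reflects the history of its inputs.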

Extrapolating from mice to human beings is always interesting. Recent discoveries about the energy efficiency of our own neurons suggest not so much human exceptionalism, or a difference of kind, as a difference of structural and functional degree.

Striking Difference Discovered Between Neurons of Humans and Other Mammals

There is something unique about human brains, but it may represent a refinement that stands to the sparse developmental connectome much as that experientially pruned connectome stands to the maximally interconnected state of the infant brain.

What is the damping mechanism? Are brains necessarily and adaptively reflexive as developmental experience (as data) imprints into (and as) their network architectures? This suggests that network architecture is less a transmission medium than a manifest record of data flows. (Think here of a river delta that adaptively shapes itself around the flow of water and silt.) Should we be rethinking the relationship of phenomenological data to the connectome that it shapes?