Australia is now so thoroughly wired into digital systems that cyber insecurity has become an ordinary cost of institutional existence and everyday subjectivity, not an abnormal failure skulking out beyond the perimeter.

The Australian Signals Directorate received more than 84,700 cybercrime reports in 2024–25, roughly one every six minutes; average self-reported losses per report rose to AU$33,000 for individuals and more than AU$80,000 for businesses. Gartner forecasts Australian information-security spending above AU$7.5 billion in 2026, while IBM places the global average cost of a data breach at US$4.44 million. These numbers are useful, but they are not sacred. They measure scale, not mastery.

The cybersecurity sector exists because the threat is real. Hospitals, ports, insurers, universities, telecommunications firms, government agencies, logistics chains, small businesses, and private citizens all sit inside the same expanding technical field. The attacks are not imaginary. The damage is not metaphorical. But the more revealing fact is that cybersecurity has become a permanent economic layer wrapped around systems that cannot, by their own nature, be permanently secured.

The technical fact is blunt: closure is impossible. Networked systems cannot be sealed because their usefulness depends upon exchange, access, updates, authentication, interoperability, remote administration, vendor support, data flow, and human participation. Human participation means that judgement, fatigue, ambiguity, habit, trust, and error are not external defects but part of the operating field. Every connection that creates value also creates exposure. Every new security layer adds another dependency. Every dependency expands the attack surface: more code, more configuration, more vendors, more credentials, more interfaces, more places where trust must be assumed and can be abused.
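The way dependencies compound can be sketched as a graph walk. This is a minimal illustration, not a model of any real ecosystem: the package names are hypothetical, and "attack surface" is crudely approximated as the count of packages transitively reachable from the application. Adding a single direct dependency, standing in for a new security product, pulls in four packages the application never chose directly.

```python
from collections import deque


def transitive_deps(graph: dict[str, list[str]], root: str) -> set[str]:
    """Return every package reachable from `root` by following dependency edges.

    Breadth-first traversal; `seen` prevents revisiting shared or cyclic deps.
    """
    seen: set[str] = set()
    queue = deque(graph.get(root, []))
    while queue:
        pkg = queue.popleft()
        if pkg in seen:
            continue
        seen.add(pkg)
        queue.extend(graph.get(pkg, []))
    return seen


# Hypothetical dependency graph: package -> packages it directly depends on.
before = {
    "app": ["web-framework"],
    "web-framework": ["http-parser", "template-engine"],
    "template-engine": ["unicode-tables"],
}

# The same graph after adding one hypothetical "security layer" to the app.
after = {
    **before,
    "app": ["web-framework", "auth-sdk"],
    "auth-sdk": ["crypto-lib", "telemetry-client"],
    "telemetry-client": ["http-parser", "json-lib"],
}

if __name__ == "__main__":
    print(len(transitive_deps(before, "app")))  # 4
    print(len(transitive_deps(after, "app")))   # 8
```

Real dependency trees are far larger and change with every update, so the sketch shows only the shape of the growth, not its size.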

The impossibility of closure is not merely planned obsolescence with better typography, though there is plenty of that wandering around the convention centre carrying branded tote bags. The deeper issue is logical. Turing's halting problem showed that there can be no universal procedure capable of examining every possible program and determining in advance whether it will terminate or run forever. Rice's theorem extends the same limit to every non-trivial question about what a program does, which is precisely the kind of question a security scanner wants answered. Gödel had already opened a corresponding fracture in formal logic itself: any consistent, effectively axiomatised system expressive enough to describe basic arithmetic contains true statements it cannot prove, and cannot prove its own consistency. Put plainly, the machine cannot completely certify itself from inside itself.
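Turing's argument can be made concrete with a small sketch of the diagonal construction. It assumes a candidate decider is any deterministic Python function `halts(program, argument)` that always returns a bool; the names `make_contrarian` and `refutes` are hypothetical illustrations, not part of any library. The sketch does not prove the theorem. It shows that whatever decider is plugged in, the program built from that decider makes it wrong.

```python
from typing import Callable

# A "program" here is any Python callable taking one argument.
Program = Callable[[object], object]
# A candidate decider claims to answer: does program(argument) halt?
Decider = Callable[[Program, object], bool]


def make_contrarian(halts: Decider) -> Program:
    """Build the diagonal program that does the opposite of whatever
    `halts` predicts it will do when run on itself."""
    def contrarian(p: Program) -> str:
        if halts(p, p):
            while True:  # predicted to halt, so loop forever instead
                pass
        return "halted"  # predicted to loop, so halt immediately
    return contrarian


def refutes(halts: Decider) -> bool:
    """Return True if `halts` is wrong about its own contrarian program.

    Assumes `halts` is deterministic and always returns. We never execute
    the looping branch: if the decider predicts "halts", the construction
    guarantees an infinite loop, so the prediction is wrong by inspection.
    """
    c = make_contrarian(halts)
    if halts(c, c):
        return True  # c(c) would enter `while True`, contradicting the prediction
    # Decider predicted "loops"; running c(c) takes the returning branch.
    return c(c) == "halted"


if __name__ == "__main__":
    candidates = {
        "optimist": lambda p, a: True,
        "pessimist": lambda p, a: False,
        "name-length heuristic": lambda p, a: len(p.__name__) % 2 == 0,
    }
    for name, decider in candidates.items():
        print(name, refutes(decider))  # each line ends in True
```

Any other deterministic, always-terminating decider fails the same way on its own contrarian; the sketch can only try a few candidates, while the theorem covers them all.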

Cybersecurity lives inside that fracture. A sufficiently complex digital system cannot fully know, predict, model, or secure every possible future behaviour of itself, its users, its updates, its supply chains, its vendors, its attackers, or the recursive interactions between them. It can test, monitor, patch, harden, simulate, isolate, and reduce exposure. It can buy time. But it cannot achieve final closure because the uncertainty is structural, not accidental. The incompleteness is woven through the conditions that make the system functional.

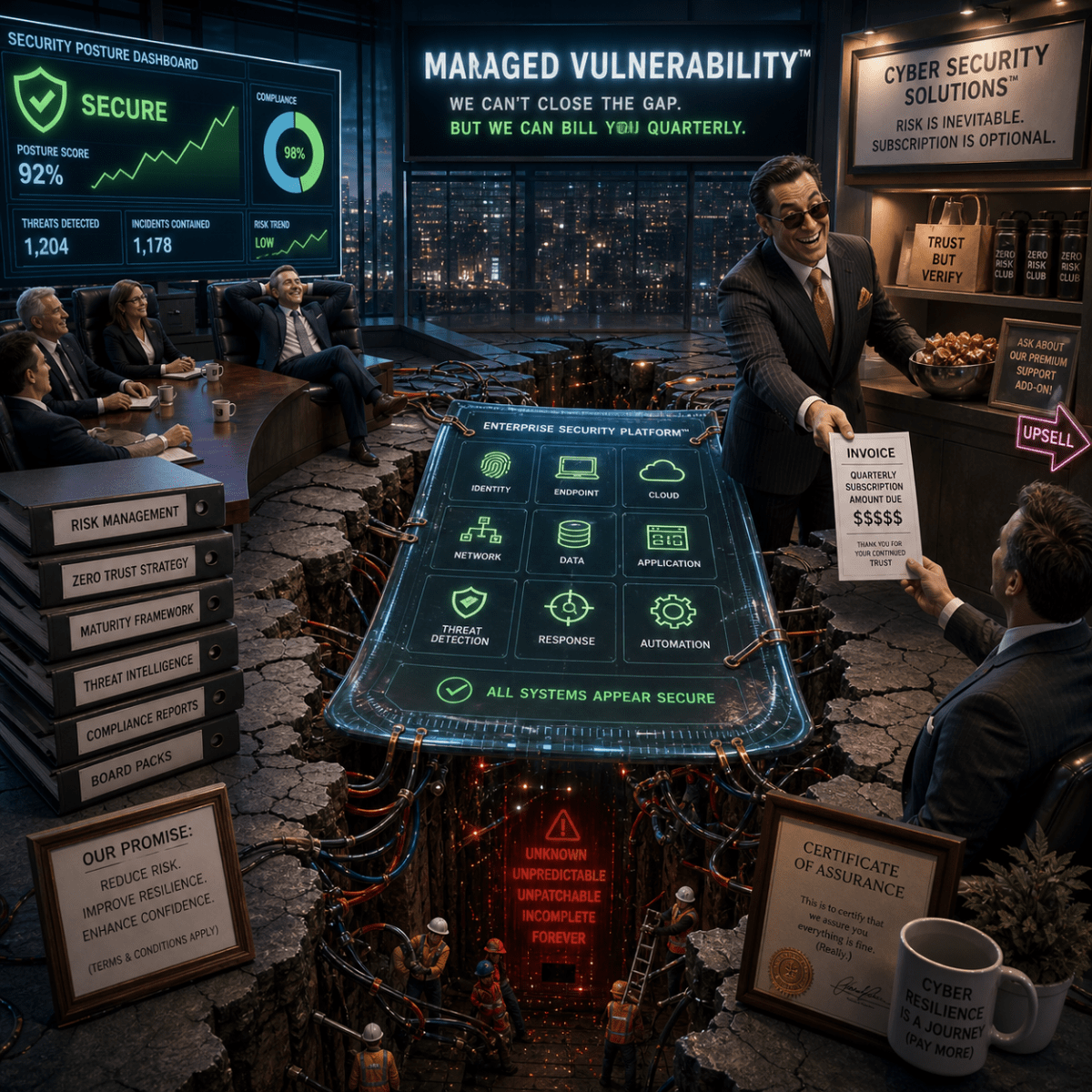

Locally, the system appears coherent. A dashboard glows green. Audit controls pass. Compliance boxes are ticked. The quarterly report arrives wrapped in enough graphs to tranquillise a board meeting. But networked systems behave less like closed machines than manifolds: locally legible, globally unresolved. Inside one coordinate patch, everything appears governed. Across the whole surface, insecurity continues propagating through unseen dependencies, delayed interactions, forgotten integrations, inherited assumptions, exhausted workers, and software stacks piled atop software stacks like geological layers of compressed managerial optimism.

The map does not master or fully survey the territory; it becomes another moving part within it.

This is where the managerial mythology begins. Executives are sold maturity frameworks, zero-trust architectures, behavioural analytics, threat intelligence feeds, automated response tooling, compliance matrices, posture dashboards, and cyber insurance. Some of these things are useful. Some are expensive theatre designed to convert uncertainty into procurement language. The trouble starts when temporary risk modulation is mistaken for impermeability, when resilience is sold as lockdown, when "secure" becomes a narcotic adjective sprayed across a permanently unfinished technical landscape.

The most profitable move is not simply reducing insecurity, but obscuring its irreducibility. The sector cannot comfortably announce that no purchase will finally close the system, no architecture will eliminate exposure, and no amount of spending will abolish breach entirely. So insecurity is translated into posture scores, uplift programs, maturity curves, assurance frameworks, and strategic transformation roadmaps. The hole remains exactly where it was, but it is surrounded by enough process language to appear governable.

For individuals, managed vulnerability becomes visceral. Ordinary people now maintain miniature domestic security infrastructures merely to participate in everyday life with slightly reduced exposure to fraud, surveillance, identity theft, scams, credential leakage, behavioural profiling, and financial predation. Password managers, VPNs, two-factor authentication apps, encrypted storage, secure backups, breach alerts, identity monitoring subscriptions: the average citizen is quietly transformed into a part-time perimeter-security administrator for a network they neither control nor meaningfully understand.

And none of these protective layers stand outside the problem they claim to solve. The password vault can be breached. The privacy service can leak. The authentication provider can fail. The defensive apparatus is itself networked, monetised, patched, exposed, and incomplete. The user pays to reduce vulnerability through systems that are themselves vulnerable. The farce is not subtle. It is just normalised.

Communication systems reproduce dissonance because dissonance is not merely noise attacking order from outside. It is part of how complex systems adapt, differentiate, learn, and persist across time. Communication is not the transfer of static messages between sealed entities. It is a self-sustaining cascade of signal, delay, correction, recurrence, amplification, and disturbance. Volatility is not a temporary flaw waiting for sufficient technological maturity to remove it. Volatility is bound into the exchange itself.

A perfectly closed communication system would cease to communicate. A perfectly secure network would cease to function as a network in any socially useful sense. The fantasy of total security is therefore the fantasy of preserving openness while abolishing the uncertainty openness creates. Good luck with that. Bring a procurement team and a wellness officer.

The farce is not that cybersecurity is fake. The threats are real enough to collapse hospitals, freeze logistics, drain bank accounts, expose governments, and ruin lives. The farce is that an enormous commercial ecosystem now depends upon obscuring the impossibility of final closure while feeding on the consequences of that impossibility. The machine cannot finally secure itself, so the user must subscribe harder. The enterprise cannot eliminate exposure, so the board must acquire another platform. The logical fracture cannot close, so the market builds another glossy interface over the crack, then sends the invoice quarterly.

Security is temporary. All of it. That is not nihilism. It is the honest beginning from which a sane technological culture would proceed. Cybersecurity should be sold as time, not salvation: time to detect, time to respond, time to recover, time to preserve coherence under conditions of unavoidable exposure. Anything beyond that drifts into mythology. And mythology, as usual, auto-renews annually.

Managed Vulnerability: The Cybersecurity Sector